The 2026 ACII Dyadic Contest (DaiKon) Workshop & Challenge

Introducing a newly emphasized dimension of affective behavior critical to social interaction

Panagiotis Tzirakis, Alice Baird, Jeffrey Brooks, Emilia Parada-Cabaleiro, Lukas Stappen, Sharath Rao, Theo Lebryk

Sponsored by Hume AI, the 2026 ACII Dyadic Contest (ACII-DaiKon) Workshop & Challenge introduces a novel benchmark for modeling interpersonal affect and social dynamics in dyadic conversations. While conversational affect modeling has advanced rapidly in recent years, most existing benchmarks and shared tasks remain speaker-centric, focusing on predictions for individual speakers rather than the coupled, time-evolving processes that arise between interacting individuals. In contrast, ACII-DaiKon emphasizes the relational and dynamic nature of human interaction, targeting phenomena such as directional influence and emotional contagion. The challenge is structured as a three-track benchmark designed to capture these dynamics at scale, incorporating both temporal structure and cross-cultural variability.

To the best of the organizers’ knowledge, this is the first ACII workshop, and one of the first efforts in the broader machine learning community, to provide a dedicated, large-scale benchmark specifically focused on dyadic interpersonal dynamics.

Other Topics

For those interested in submitting research to the DaiKon workshop outside of the competition, we encourage contributions related to the following topics:

Modeling interpersonal affect and social dynamics

Dyadic and multi-party interaction modeling

Temporal modeling of affect trajectories

Cross-modal fusion for social signals (audio, video, text, physiology)

Representation learning for social and emotional behavior

Cross-cultural dyadic interactions

Submission details TBA.

Important Dates (AoE)

Challenge Opening (data and baselines released): April 6, 2026

Baseline Paper released: April 20, 2026

Test set released: May 20, 2026

All Tracks submission deadline: May 25, 2026 (submit predicted test set labels to competitions@hume.ai)

Workshop paper submission: May 30, 2026

Notification of Acceptance/Rejection: July 3, 2026

Camera Ready: July 10, 2026

Conference: September 7-10, 2026, Puebla, Mexico

Challenge Tasks

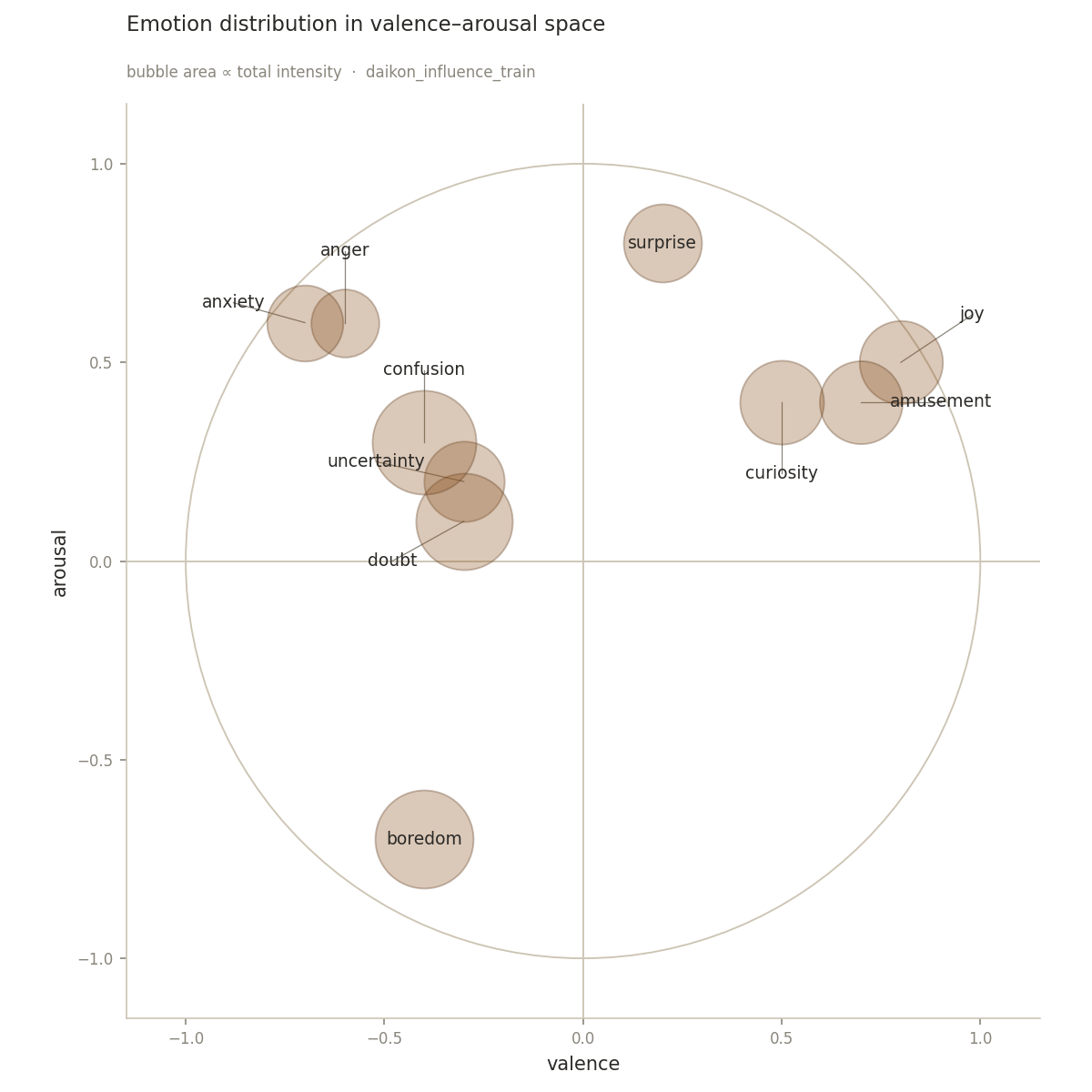

The Influence Sub-Challenge, a dyadic affect prediction task (DaiKon Influence). In the DaiKon Influence sub-challenge, participants will predict a target speaker's affective state for each labeled speech segment in a dyadic conversation. Given the multimodal conversational context, systems will output continuous intensity estimates for 10 emotion dimensions for the target segment: anger, anxiety, uncertainty, confusion, doubt, boredom, surprise, curiosity, joy, and amusement. Participants will report Concordance Correlation Coefficient (CCC), as well as Pearson correlation, averaged across the target emotion dimensions.
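
As a rough illustration of the metrics named above, the following minimal sketch computes CCC and Pearson correlation per emotion dimension and averages them across the 10 dimensions. It assumes population (biased) variances and a simple dictionary layout keyed by emotion name. The function names and data layout are hypothetical, and the official scoring code in the baseline repository may differ in such details.

```python
from statistics import fmean

# The 10 target emotion dimensions listed in the task description.
EMOTIONS = ["anger", "anxiety", "uncertainty", "confusion", "doubt",
            "boredom", "surprise", "curiosity", "joy", "amusement"]


def _moments(y_true, y_pred):
    """Return means, population variances, and covariance of two sequences."""
    mean_t, mean_p = fmean(y_true), fmean(y_pred)
    var_t = fmean([(t - mean_t) ** 2 for t in y_true])
    var_p = fmean([(p - mean_p) ** 2 for p in y_pred])
    cov = fmean([(t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred)])
    return mean_t, mean_p, var_t, var_p, cov


def pearson(y_true, y_pred):
    """Pearson correlation; constant inputs (zero variance) are not handled."""
    _, _, var_t, var_p, cov = _moments(y_true, y_pred)
    return cov / (var_t * var_p) ** 0.5


def ccc(y_true, y_pred):
    """Concordance Correlation Coefficient.

    Unlike Pearson, CCC also penalizes differences in mean and scale.
    """
    mean_t, mean_p, var_t, var_p, cov = _moments(y_true, y_pred)
    return 2 * cov / (var_t + var_p + (mean_t - mean_p) ** 2)


def mean_over_emotions(truth, pred, metric):
    """Average a metric over emotions (hypothetical layout: emotion -> scores)."""
    return fmean([metric(truth[e], pred[e]) for e in EMOTIONS])
```

For example, predictions that track the ground truth perfectly but are offset by a constant keep a Pearson correlation of 1.0 while their CCC drops below 1.0, which is why the two metrics are reported together.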

The Turn-Taking Sub-Challenge, a conversational timing and speaker prediction task (DaiKon Turn Taking). In the DaiKon Turn Taking sub-challenge, participants will predict who speaks next and when the next speech onset occurs. Systems will output (i) next-speaker prediction as a classification task and (ii) time-to-next-speech as a regression task. Participants will report Macro-F1 and accuracy for next-speaker prediction, and MAE for time-to-next-speech.
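
The three turn-taking metrics can be sketched in plain Python as follows. This is an illustrative sketch only: the speaker labels `"A"` and `"B"` and the function names are assumptions rather than the challenge's actual label encoding or scoring code, and Macro-F1 is computed here over the union of labels seen in the ground truth and the predictions.

```python
def accuracy(y_true, y_pred):
    """Fraction of next-speaker predictions that match the ground truth."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores.

    A class with no true and no predicted instances cannot occur here, since
    classes are taken from the union of both label sets.
    """
    labels = sorted(set(y_true) | set(y_pred))
    f1_scores = []
    for label in labels:
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        f1_scores.append(2 * tp / denom if denom else 0.0)
    return sum(f1_scores) / len(f1_scores)


def mae(y_true, y_pred):
    """Mean absolute error for the time-to-next-speech regression."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical toy data: speaker labels and time-to-next-speech values.
speakers_true = ["A", "A", "B", "B"]
speakers_pred = ["A", "B", "B", "B"]
times_true = [0.5, 1.0, 2.0]
times_pred = [0.7, 0.6, 2.5]
```

Macro-F1 weights both speakers equally regardless of how often each one takes the next turn, so it is less forgiving than accuracy when one speaker dominates the conversation.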

The Rapport Sub-Challenge, a time-evolving interaction quality prediction task (DaiKon Rapport). In the DaiKon Rapport sub-challenge, participants will predict rapport for labeled windows throughout a dyadic conversation, rather than only a single conversation-level score. Given the multimodal conversational context, systems will output a continuous rapport score for each labeled window. Participants will report Concordance Correlation Coefficient (CCC), as well as Pearson correlation, between predicted and ground-truth rapport scores, averaged across conversations.
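
Averaging "across conversations" can be read as computing the metric separately within each conversation and then taking the mean of those per-conversation scores. The sketch below illustrates that reading for CCC. The `(conversation_id, true_score, predicted_score)` row layout and the function names are hypothetical, population variances are assumed, and the official evaluation may aggregate differently.

```python
from collections import defaultdict
from statistics import fmean


def ccc(y_true, y_pred):
    """Concordance Correlation Coefficient using population variances."""
    mean_t, mean_p = fmean(y_true), fmean(y_pred)
    var_t = fmean([(t - mean_t) ** 2 for t in y_true])
    var_p = fmean([(p - mean_p) ** 2 for p in y_pred])
    cov = fmean([(t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred)])
    return 2 * cov / (var_t + var_p + (mean_t - mean_p) ** 2)


def mean_ccc_over_conversations(rows):
    """Group labeled windows by conversation, score each, then average.

    rows: iterable of (conversation_id, true_score, predicted_score),
    one entry per labeled window (hypothetical layout).
    """
    by_conversation = defaultdict(lambda: ([], []))
    for conv_id, true_score, pred_score in rows:
        by_conversation[conv_id][0].append(true_score)
        by_conversation[conv_id][1].append(pred_score)
    return fmean([ccc(t, p) for t, p in by_conversation.values()])


# Hypothetical toy data: two conversations with four labeled windows each.
rows = [
    ("c1", 0.0, 0.0), ("c1", 1.0, 1.0), ("c1", 2.0, 2.0), ("c1", 3.0, 3.0),
    ("c2", 0.0, 1.0), ("c2", 1.0, 2.0), ("c2", 2.0, 3.0), ("c2", 3.0, 4.0),
]
```

Under this per-conversation scheme, each conversation contributes equally to the final score regardless of how many labeled windows it contains.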

Challenge Baselines

The organizers have prepared a set of multimodal baselines for each of the three tasks. To reproduce baselines, please find the code at github.com/HumeAI/competitions.

| Sub-Challenge | Modality | Val | Test |
|---|---|---|---|
| Influence (CCC / Pearson) | Audio | 0.39 / 0.50 | 0.40 / 0.50 |
| | Video | 0.17 / 0.29 | 0.19 / 0.30 |
| | Multimodal | 0.39 / 0.50 | 0.40 / 0.50 |
| Turn-Taking (Macro-F1 / MAE) | Audio | 0.61 / 1.53 | 0.66 / 1.50 |
| | Video | 0.51 / 1.57 | 0.51 / 1.55 |
| | Multimodal | 0.61 / 1.53 | 0.63 / 1.50 |
| Rapport (CCC / Pearson) | Audio | 0.65 / 0.67 | 0.68 / 0.70 |
| | Video | 0.23 / 0.28 | 0.26 / 0.31 |
| | Multimodal | 0.58 / 0.63 | 0.59 / 0.64 |

The Challenge Dataset

The DaiKon Challenge dataset consists of 945 sessions totaling 743 hours of recordings from 5 countries. The challenge data is a subset of a larger corpus curated by Hume AI, designed to capture rich, naturalistic human interactions across diverse settings and populations.

All sessions were originally captured as dual-channel audio recordings using a proprietary recording platform developed and hosted by Hume AI. This setup enables high-quality separation of speaker streams, supporting detailed analysis of conversational dynamics.

Participants were recruited from multiple countries, ensuring linguistic and cultural diversity within the dataset. All participants provided informed consent prior to data collection, and the dataset was assembled in accordance with applicable ethical guidelines and data protection standards.

For inquiries regarding Hume AI Dual Channel Data, please reach out at link.hume.ai/sales-partnerships-form

| Split | Rooms | Hours | DE | EN | ES | NL | PL |
|---|---|---|---|---|---|---|---|
| Train | 661 | 504.3 | 46 | 328 | 111 | 67 | 107 |
| Val | 142 | 118.9 | 10 | 75 | 26 | 11 | 18 |
| Test | 142 | 120.2 | - | - | - | - | - |

Figure 1. Aggregate emotion intensity of the training split, projected onto Russell's valence–arousal plane; bubble area is proportional to the summed soft-label intensity of each emotion across all segments.

Figure 2. A 10x10 grid of randomly selected frames from participant videos in the DaiKon Challenge dataset.

Team Registration

To gain access, register your team by emailing competitions@hume.ai with the following information:

Team Name, Researcher Name, Affiliation, and Research Goals

Restricted Access: After registering your team, you will receive an End User License Agreement (EULA) for signature. Please note that this dataset is provided only for ACII DaiKon Challenge use.

Results Submission

For each task, participants should submit their test set predictions as a zip file to competitions@hume.ai.

Organizers

Dr. Panagiotis Tzirakis. Hume AI, New York, USA. panagiotis@hume.ai. [Main Contact] Panagiotis Tzirakis is an AI research scientist working at the intersection of multimodal deep learning, affective computing, and audio-visual representation learning. He obtained his Ph.D. from Imperial College London (iBUG) in 2021, where he contributed to scalable, end-to-end multimodal emotion recognition and real-world affect modeling. He publishes in leading journals and conferences including Information Fusion, International Journal of Computer Vision, ICASSP, INTERSPEECH, and ACM Multimedia (i10-index: 38). He has co-organized several workshops and challenges, including ACII-VB’22, ICML ExVo’22, and CVPR ABAW’25, ’26.

Dr. Alice Baird. Hume AI, New York, USA. alice@hume.ai. Alice Baird is an AI researcher specializing in computational paralinguistics and affective computing, with a focus on stress and emotional well-being. She received her PhD in 2021 from the University of Augsburg’s Chair of Embedded Intelligence for Health Care and Wellbeing. Her work on emotion understanding from speech, physiological, and multimodal signals has been widely published in leading venues such as INTERSPEECH, ICASSP, IEEE Intelligent Systems, and the IEEE Journal of Biomedical and Health Informatics (i10-index: 68). She has also co-organized international workshops and challenges, including the 2022 ACII Affective Vocal Bursts Workshop and Challenge.

Dr. Jeffrey Brooks. Hume AI, New York, USA. jeff@hume.ai. Jeffrey Brooks is a computational emotion scientist with expertise in emotional expression, computational affective neuroscience, and emotional AI. He completed his PhD at New York University in 2021. His work on emotional expression and recognition in the face and voice has been published in leading interdisciplinary journals such as Nature Human Behaviour and Proceedings of the National Academy of Sciences (i10-index: 20).

Dr. Emilia Parada-Cabaleiro. University of Music Nuremberg. emiliaparada.cabaleiro@hfm-nuernberg.de. Emilia Parada-Cabaleiro received her PhD in 2016 from the University of Rome Tor Vergata, Italy. She is a music therapist, elementary music educator, and musicologist. Her research interests lie at the intersection of psychology, musicology, and computer science, with a particular focus on affective computing. Her work on emotion and speech modeling has been widely published in leading international venues, including INTERSPEECH and other top conferences in speech and affective computing (i10-index: 33).

Dr. Lukas Stappen. BMW Group, Munich, Germany. lukas.stappen@bmw.de. Lukas Stappen is an AI researcher with expertise in multimodal learning, affective computing, and large language models. He obtained his PhD from the University of Augsburg in 2021, where he contributed to human-centric multimodal understanding and (co-)organized international workshops and challenges, including founding the MuSe Challenge series (2020-2024) for advancing multimodal sentiment analysis. His current research focuses on LLM-based voice assistants and AI safety. His work has been widely published in leading venues such as IEEE Transactions on Affective Computing, ACM Multimedia, ACL, INTERSPEECH, and ICASSP (i10-index: 26).